#Splunk props conf free

I hope this tip was helpful and obviously feel free to drop any question in the comments. Then the IDS sourcetype stanza in the nf will do its thing and problem solved !

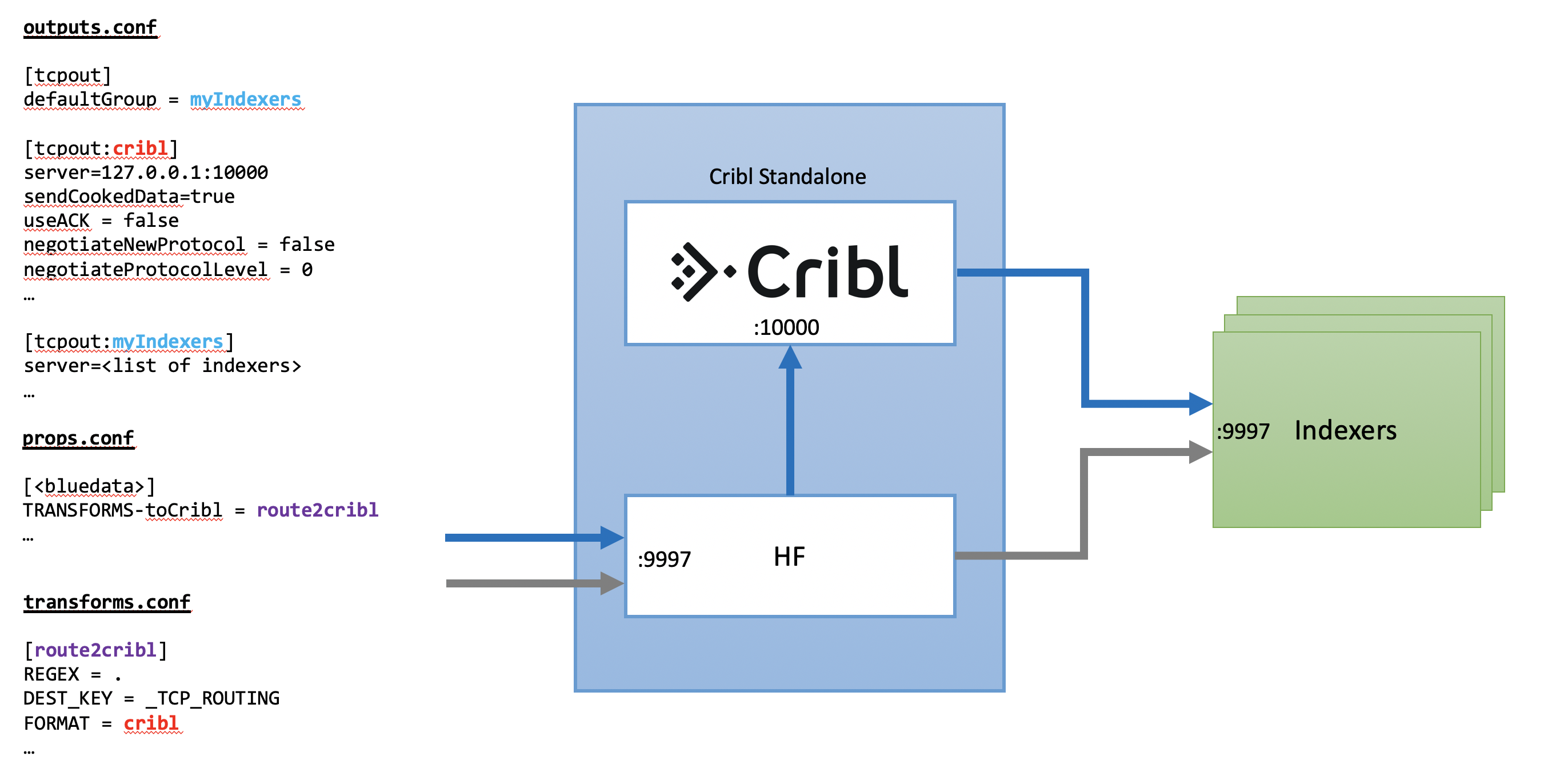

the forwarder itself,listening on another port. The basic ideas is to have those IDS event, after being assigned with the proper sourcetype, go through the syslog routing where the server is. Depending on your own site practices, you might perform additional configuration, such as assigning different source types, routing events to different indexes, or using secure TCP. So here is the solution I've found to create a loopback that will make the IDS events go back through the pipeline and have the time zone properly adjusted. The following Splunk configuration stanzas define a minimal basic configuration for streaming JSON Lines over TCP: one stanza in nf, and one in nf. nf is commonly used for: Configuring line breaking for multi-line events. Version 8.1.0 This file contains possible setting/value pairs for configuring Splunk softwares processing properties through nf. Just adding the new IDS sourcetype stanza in nf wouldn't work because normally splunk goes once through the pipeline and wouldn't get back to the Typing pipeline after first changing the sourcetype key to the IDS key. The following are the spec and example files for nf. However in this case, to make things worse, the events included a unique IDS log with a different time zone than my locale and without any identification in the time stamp so the splunk time interpreter took the time as it is without adjusting it to UTC. A fairly standard procedure up to this point. Recently I had to improve the data quality of a source that is feeding my splunk instance with various security events over a single port.Ī major part of the process I'm usually following is breaking the events into different source types using regex. Conf20 session was already recorded, you might want to consider the below as an addendum since it is inline with the session topic and the motivation to spend hours finding a solution stem from the same problem statement: What to do if you have very little or no control over the data source ? 
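The IDS loopback described in this post can be sketched roughly as follows. This is not the exact configuration from the original setup: the stanza names, the `IDS-ALERT` regex, the `security_events` and `ids_alert` sourcetypes, port 5514, and the time zone are all hypothetical placeholders. It assumes a heavy forwarder, since the routing happens at parse time.

```ini
# transforms.conf
# First pass: tag the IDS events and mark them for syslog routing.
# The regex is a placeholder; use a pattern that matches only the IDS events.
[set_sourcetype_ids]
REGEX = IDS-ALERT
FORMAT = sourcetype::ids_alert
DEST_KEY = MetaData:Sourcetype

[route_ids_to_loopback]
REGEX = IDS-ALERT
FORMAT = ids_loopback
DEST_KEY = _SYSLOG_ROUTING

# props.conf
# Stanza for the sourcetype assigned to the shared input (hypothetical name).
[security_events]
TRANSFORMS-ids = set_sourcetype_ids, route_ids_to_loopback

# Second pass: events arriving on the loopback port already carry this
# sourcetype, so the time zone is applied during timestamp extraction.
# Replace the zone with the one the IDS actually logs in.
[ids_alert]
TZ = Europe/Berlin

# outputs.conf
# The syslog "server" is the forwarder itself, on another port.
[syslog:ids_loopback]
server = 127.0.0.1:5514
type = udp

# inputs.conf
# Loopback listener that feeds the events back into the pipeline.
[udp://5514]
sourcetype = ids_alert
```

Two things this sketch leaves open and that are worth checking against real data: the syslog output may prepend a priority or header to each event, which can affect timestamp extraction on the second pass, and the first-pass copy of each IDS event may still be indexed unless it is handled separately.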
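For the JSON Lines over TCP case mentioned earlier, the two stanzas might look something like the sketch below. The port number, the `jsonl_events` sourcetype name, and the target index are assumptions chosen for illustration, not values from the original documentation.

```ini
# inputs.conf
# Listen for JSON Lines on a TCP port (hypothetical port and names).
[tcp://6789]
sourcetype = jsonl_events
index = main

# props.conf
# One JSON object per line: break on newlines and do not merge lines.
[jsonl_events]
SHOULD_LINEMERGE = false
LINE_BREAKER = ([\r\n]+)
KV_MODE = json
```

Timestamp settings such as `TIME_PREFIX` and `TIME_FORMAT` are left out here because they depend on the shape of the incoming JSON.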
I’ll start by navigating to Settings -> Fields -> Field Aliases to make a new field alias. For this example, everything I’m creating is very fabricated, just so it’s easy to identify in the logs.
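A field alias created this way ends up as a `FIELDALIAS` setting in props.conf, so that is the kind of change to look for in the log. A minimal sketch of what the saved setting might look like, with a hypothetical sourcetype, class name, and field names:

```ini
# props.conf, in the local directory of the app where the alias was saved
[fabricated_sourcetype]
FIELDALIAS-fabricated_alias = original_field AS aliased_field
```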

The configuration_change.log file is stored in the default Splunk log directory, $SPLUNK_HOME/var/log/splunk. Indexing for this log is enabled by default in Splunk 9.0. Let’s explore a few changes and how they’re logged in this file and in Splunk. Let’s start by making a simple change in Splunk Web to observe how it is logged.

#Splunk props conf update

I got the data into Splunk, but it is not breaking correctly. I initially tested through the web interface, where it breaks correctly, but it does not break correctly through a monitor stanza. The timestamp field in the CSV file is in the below format.

One of the most requested features in Splunk has been better audit logging for changes. With the introduction of Splunk Enterprise 9.0, a new feature has been introduced for configuration change tracking. Let’s take a look at how this new feature works!

To implement the change logging feature, Splunk 9.0 introduces a new log file, configuration_change.log, and a new index, _configtracker. While this feature is enabled by default, you may need to update your indexes configuration to ensure that the _configtracker index is stored on the correct volume when you upgrade.
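Placing the _configtracker index on a specific volume could look roughly like the following in indexes.conf. The volume name, path, and size limit are hypothetical; match them to the volumes your other indexes already use.

```ini
# indexes.conf
# Hypothetical volume definition; reuse an existing one if you have it.
[volume:primary]
path = /opt/splunk_data
maxVolumeDataSizeMB = 500000

[_configtracker]
homePath = volume:primary/_configtracker/db
coldPath = volume:primary/_configtracker/colddb
# thawedPath cannot reference a volume, so it stays under $SPLUNK_DB.
thawedPath = $SPLUNK_DB/_configtracker/thaweddb
```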